Spliced feed for Security Bloggers Network |

| Embedded Webapp Fun: NetScreen [HiR Information Report] Posted: 18 Oct 2008 03:20 AM CDT I like to tunnel SSH, whether it's for getting around a captive portal at an airport or for encrypting your traffic at DefCon. At home, I use an old NetScreen 5XP-Elite firewall. The "Elite" has absolutely nothing to do with its mad $k1llz. It just means it's got an unlimited network license, which is good because I have a lot of freaking computers in the Lab-O-Ratory. I wanted to run SSH over port 53 (DNS) as well as 22, to aid in tunneling SSH out of networks where external DNS is allowed (more often than you'd think), but the GUI threw an error at me. On the CLI, I was met with this:

ns5-> set vip untrust-ip 53 SSH 192.168.0.56

Well... CRAP! I don't usually take "no" for an answer too easily. Some JavaScript told me I couldn't do it. Some little parameter within the CLI told me the same thing. I have a pretty hard time believing that the kernel of my firewall is incapable of spawning an SSH forward on port 53 when port 22 or 31337 would work just fine. Skepticism of limitations is one of the fundamental elements of the security mindset.

I poked through the source of the page, but it's all rendered by on-the-fly JavaScript. I decided that if I wanted to see what the form post was passing, it would be easier to sniff the session, so I fired up my old friend, Wireshark. Note that the web admin interface only runs on the internal interface, so I'm tunneling port 80 directly to it through one of my systems on the inside, hence the localhost:80 HTTP session. I opted to redirect port 2201 to the internal SSH box, since it's well within the range of acceptable ports. I did a "Follow TCP Stream" and VOILA! This gave me everything I needed to make a quick HTML file that posts whatever port number I want as the "port" variable.
I decided to take the easy way out and see whether NetScreen's web interface would accept the variables as a GET, by pasting them into the location bar instead, just to see. I broke it up into three lines here, but you can see the only thing I changed was "2201" to "53":

http://localhost/n_vip_l.html?
port=53&srv_vip_l=37&to_ip=192.168.0.56&
confirm=++++OK++++&idx=-1&p_port=-1

Success!

What's the point? This was a practical example of how to putz around with stuff you may already have and dabble in the fascinating world of web application security. As mentioned before, it's also an exercise in thinking beyond limitations. NetScreen was founded by a bunch of ex-Cisco guys, and the IOS-esque "ScreenOS," as it's called, really shows it. In 2004, NetScreen was snarfed up by Juniper Networks. Juniper's low-end SSG-5 is about the closest living relative to my beloved yet crufty home firewall. On an odd side note, Frogman and I got to see Gerald Combs (creator of the project now called Wireshark) give one of his very, very rare talks on TCP/IP, which included a lot of Ethereal's backstory. |
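For anyone who would rather script the request than paste it into the location bar, here is a minimal Python sketch that rebuilds the same GET URL for an arbitrary port. It assumes the NetScreen web UI accepts exactly the parameters captured in the sniffed form post; `build_vip_url` and `BASE_URL` are hypothetical names, and the meaning of the fixed values (for example `srv_vip_l=37`) is copied, not documented. It only builds the URL and does not send it, since the real request presumably has to ride on an authenticated admin session.

```python
from urllib.parse import urlencode

# Base URL of the admin page, reached through the author's local tunnel.
BASE_URL = "http://localhost/n_vip_l.html"


def build_vip_url(port: int, to_ip: str, base_url: str = BASE_URL) -> str:
    """Rebuild the sniffed VIP form submission as a GET URL.

    Parameter names and fixed values are copied from the captured
    request; their exact meaning inside ScreenOS is an assumption.
    """
    params = {
        "port": port,              # external port on the untrust interface
        "srv_vip_l": 37,           # service index as captured (assumed: SSH)
        "to_ip": to_ip,            # internal host to forward to
        "confirm": "    OK    ",   # urlencode() encodes each space as '+'
        "idx": -1,
        "p_port": -1,
    }
    # dicts keep insertion order, so the query matches the captured order
    return f"{base_url}?{urlencode(params)}"


if __name__ == "__main__":
    print(build_vip_url(53, "192.168.0.56"))
```

Running it prints the same one-line URL shown in the post, with `++++OK++++` produced by the standard form encoding of the padded "OK" button label.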

| Governator Vetoes Bill [TriGeoSphere] Posted: 18 Oct 2008 02:27 AM CDT

California’s Governor, Arnold Schwarzenegger, vetoed the state legislature’s second attempt to pass a Consumer Data Protection Act. While the new bill softened some provisions found in the original, such as the requirement that a breached organization reimburse financial institutions for the cost of replacing credit cards, it remained a flawed bill in many respects.

By vetoing the bill, the Governor once again concluded that adequate protection already exists. Schwarzenegger wrote, “As I stated in last year’s veto of a similar bill, this bill attempts to legislate in an area where the marketplace has already assigned responsibilities and liabilities that provide for the protection of consumers.” I had a chance to talk about the proposed legislation last month. During the discussion, I expressed my hope that the Governor would again veto the bill, because I saw it as an inadequate attempt to define appropriate data-handling requirements with only one possible outcome… litigation. The bill meant well but fell short of providing any significant new value, and it included minimal guidance on how to minimize the potential loss of data. Its technical focus is limited to storage and transmission, suggesting that businesses: These aren’t unreasonable requests… Inappropriate customer data storage and transmission have been the leading culprits in several recent breaches. Unfortunately, storage and transmission breaches are only the tip of the iceberg.
Businesses continue to lose sensitive data through wireless access points, weak passwords, weak encryption, vendor-default or contractor passwords, systems compromised by keyloggers, trojans and more. Plain and simple: if a business handles a meaningful volume of credit card data, there is a high probability someone is looking for a way to get it. Considering all the risks, and the reality that security can be expensive, don't we need legislation? Perhaps… but not this legislation. It didn't highlight many of the possible attack vectors, and PCI already enforces everything the proposed legislation would offer. Given the California bill’s shortcomings, I wonder who the target audience for the bill was. Were they serious about requiring businesses to protect the data, or was their agenda focused on generating evidence to assign blame? Clearly, the most meaningful consumer data protection comes from taking responsible and prudent steps to prevent data loss. Even under the best of circumstances, no one can guarantee that a loss will never occur, and that’s where California led the way with disclosure legislation. In my opinion, this legislation was ill-conceived and I hope it won’t be back. What do you think? |

| Posted: 18 Oct 2008 12:35 AM CDT I just love this story! I'm not at all certain I would dare to try it myself. Reading how Schneier uses fake boarding passes and brings 24 oz of unidentified liquid through airport security is like reading a Ken Follett novel! And you all know what I think of airport security! |

| IRS Computers Full of Security Holes [The IT Security Guy] Posted: 17 Oct 2008 09:17 PM CDT The IRS has sensitive data on 130 million people filing tax returns. But the computer systems storing that data have inadequate security controls, according to a report released in September by the Treasury Inspector General for Tax Administration. The security issues run the gamut from inadequate access controls and a lack of auditing of privileged users to weak application security. The study focused on the Customer Account Data Engine (CADE, for you acronym junkies who aren't US government employees), which is meant to streamline access to taxpayer data. I guess that would also streamline access for hackers. The IRS was aware of the issues but didn't think they were important. Now they do, and they have agreed to work with the Inspector General's office to fix the vulnerabilities, the report says. |

| An interesting (to me) discovery... [PaulDotCom] Posted: 17 Oct 2008 03:26 PM CDT Those of you who listen to the podcast may be aware that I'm doing some research into utilizing document metadata, and the inferences an attacker can make about a victim's overall operating environment. This analysis would allow an attacker to deliver more accurate, targeted attacks against a victim. One of the tools that I've been using is ExifTool, which, at its core, is an overdriven command-line front end to all of the options of libexif. The other day I discovered an advisory about an integer-overflow vulnerability in libexif, the basis for several other tools I've been looking at as well. There is no known exploit, but from the description, it seems fairly trivial to create a corrupt file to trigger the condition. Time to proceed with my analysis more cautiously. That said, you should always analyze unknown, untrusted files in a protected environment. Maybe the environment is an air-gapped machine, or a VM with no networking that you can revert to a known state. This, all in your non-production lab of course. This also brings up some more points. I relate this to updates to the tools many of us use every day that introduce vulnerabilities into our environments. Don't forget that the complexity of the options in a tool (Wireshark, for example), or the complexity and implementation of a standard (Exif, 802.11), all contribute to some potential downfall. That said, I love multi-purpose, extensible tools and standards (Wireshark, Exif and 802.11 included!), but for these complexity reasons, it is important to keep them up to date and to evaluate the implementations. Sometimes this is easier said than done. - Larry "haxorthematrix" Pesce |
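The integer-overflow advisory is a reminder that metadata parsers trust length fields inside files an attacker controls. Here is a minimal Python sketch of the defensive habit involved: walking the segments of an untrusted JPEG and checking every 16-bit length against the bytes actually present before using it. This is an illustration of the general technique, not code from libexif or ExifTool; `find_exif_segment` is a hypothetical name, and it only handles the common layout of length-prefixed marker segments before the start-of-scan marker. Python won't wrap integers the way C does, but these are the same checks that keep a C parser from reading out of bounds, and it is still no substitute for the isolated lab the post recommends.

```python
import struct
from typing import Optional


def find_exif_segment(data: bytes) -> Optional[bytes]:
    """Return the payload of the first APP1 "Exif" segment in a JPEG, or None.

    Each 16-bit length read from the file is checked against the bytes that
    are actually present before it is used, so a corrupt or hostile length
    field raises ValueError instead of causing an out-of-bounds read.
    Only the usual layout (length-prefixed marker segments up to the
    start-of-scan marker) is handled.
    """
    if len(data) < 2 or data[:2] != b"\xff\xd8":   # SOI marker
        raise ValueError("not a JPEG: missing SOI marker")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = data[pos + 1]
        if marker == 0xDA:                         # SOS: image data follows
            break
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        # The length counts its own two bytes, and the segment must fit.
        if length < 2 or pos + 2 + length > len(data):
            raise ValueError(f"bad segment length {length} at offset {pos}")
        payload = data[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return payload
        pos += 2 + length
    return None
```

A file whose APP1 length claims 65,535 bytes but carries only a handful is rejected with a `ValueError` rather than read past its end.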

| Response: "Is Twitter the newest data security threat?" [HiR Information Report] Posted: 17 Oct 2008 09:37 AM CDT Lori MacVittie posted a compelling piece asking "Is Twitter the newest data security threat?" In my opinion, the answer is "No." It's merely one of tens of thousands of potential avenues of exploitation that can be used, intentionally or unintentionally, by the real security threat: those whom you trust to access your data in the first place. Data Loss Prevention suites, Network Access Control, filtering web proxies and other technological solutions only mask the problem while making it harder for your employees to work efficiently. Michael J. Santarcangelo, II's book, Into The Breach, concisely discusses the real problem behind breaches and a sound strategy to make it better. It takes everything we already acknowledge as security professionals and rearranges it in a way that makes a lot of sense. In short, security researchers, employers and journalists need to wake up. Use technology to assist properly trained employees who are held accountable for their mistakes, instead of using technology to restrict clueless employees and letting the blame fall on some software package when things go wrong. When do you start ACTUALLY trusting the people you trust with your data? The issue of customer service via Twitter is a different can of worms. The decision to use Twitter as an enterprise avenue of support is a strategic one that's better left to marketing, PR and CxO types. I'd hope they'd analyze the potential impact of making a subset of their customer list public. |

| Posted: 17 Oct 2008 08:46 AM CDT I am reshaping the blog template again, and contrary to what I preach, I am doing the testing directly on the production website. When I was involved in website development and production back in the 1990s, I always had three copies of every site we managed: the production site, a full mirror of the production site for final testing and backup purposes, and a testing site for development. This enabled us to avoid what I am experiencing right now, where some simple, small changes alter the whole look and feel of the website. So my advice to you when you mess about with your blog, website, application or network: make sure you have a separate testing environment where you can make and test changes before you apply them to the production system! Learn from my mistakes! |

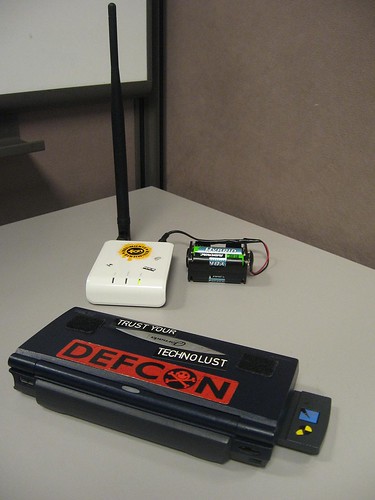

| Friday Geek-Out: WiFi Disruption Edition! [HiR Information Report] Posted: 17 Oct 2008 08:38 AM CDT If anyone still wants to go to Daily Dose tonight, that's fine. Some of us will be at Casa De Ax0n hacking on wireless stuff. Given that all things Karma have the potential to disrupt nearby WiFi service, we've opted to take the geek-out away from the normal venue this week. I really don't feel like getting banned from my favorite bar and coffee shop. Like many others before me, I've freed my Fon from the wall outlet with a clip holding 4 AA batteries wired in series for 4.8 volts. I'm currently using a 6-cell clip with the last two slots bypassed. It will hold five cells just fine for the full 6 VDC that the Fon was designed to use. I may do that this evening. Asmodian X and I have been tinkering with these Fon routers for a few days now. We've both bricked (and subsequently un-bricked) them, and they're both running the latest version of OpenWrt. Now it's time to see what we can do with them. The HiR WiFi Lab is going to be in full swing this evening. RSVP if you think you want to show up. I will need to clear it with w1fe 1.0, who probably doesn't want TOO many geeks coming over. |

| IDS: Vitamins Or Prophylactic? [Rational Survivability] Posted: 17 Oct 2008 07:32 AM CDT

Ravi makes a number of interesting comments in his blog post titled "IDS/IPS - is it Vitamins?" I'd like to address them because they offer what I maintain is a disturbing perspective on the state and implementation of IDS today, referencing deployment models and technology baselines from about 2001 that, based on my personal experience, don't reflect reality. Ravi doesn't allow comments on his blog, so I thought I'd respond here.

Firstly, I'm not what I would consider an IDS apologist, but I do see the value in being able to monitor and detect things of note as they traverse my network. In order to "prevent," you first must be able to "detect," so the notion that one can have one without the other doesn't sound very realistic to me.

Honestly, I'd like to understand what commercial stand-alone IDS-only solutions Ravi is referring to. Most IDS functions are built into larger suites of IDP products and include technology such as correlation engines, vulnerability assessment, behavioral anomaly detection, etc., so calling out IDS as a failure when in reality it represents the basis of many products today is nonsensical. If a customer were to deploy an IPS in-line or out-of-band in "IDS" mode and not turn on automated blocking or all of the detection or prevention capabilities, is it fair to simply generalize that an entire suite of solutions is useless because of how someone chooses to deploy it? I've personally deployed Snort, Sourcefire (with RNA), ISS, Dragon, TippingPoint, TopLayer and numerous other UTM and IDP toolsets, and the detection, visibility, reporting, correlation, alerts, forensic and (gasp!) preventative capabilities these systems offered were in line with my expectations for deploying them in the first place.

"IDS can capture tons of intrusion events, there is so much of don't care events it is difficult to single out event such as zero day event in the midst of such noise."

Yes, IDS can capture tons of events. You'll notice I didn't say "intrusion events," because in order to quantify an event as an "intrusion," one would obviously have already defined it as such in the IDS, thus proving the efficacy of the product in the first place. This statement is contradictory on its face. The operator's decision not to appropriately "tune" the things he or she is interested in viewing, or worse yet, not to invest in a product that allows one to do so, is a "trouble between the headsets" issue and not a generic solution-space one. Trust me when I tell you there are plenty of competent IDS/IPS systems available that make this sort of decision-making palatable and easy.

To take a note from a friend of mine, the notion of "false positives" in IDS systems seems a little silly. If you're notified that traffic has generated an alert based upon a rule/signature/trigger you defined as noteworthy, how is that a false positive? The product has done just what you asked it to do! Tuning IDS/IPS systems requires context and an understanding of what you're trying to protect, from where, from whom, and why. People looking for self-regulating solutions are going to get exactly what they deserve.

Secondarily, if an attacker is actually using an exploit against a zero-day vulnerability, how would *any* system be able to detect it, given the very definition of a zero-day?

"It requires tremendous effort to sift through the log and derive meaningful actions out of the log entries."

I again remind the reader that manually sifting through "logs" and "log entries" is a rather outdated concept and sounds more like forensics and log analysis than network IDS, especially given today's solutions replete with dashboards, graphical visualization tools, etc.

"IDS needs a dedicated administrator to manage. An administrator who won't get bored of looking at all the packets and patterns, a truly boring job for a security engineer. Probably this job would interest a geekier person and geeks tend to their own interesting research!"

I suppose that this sort of activity is not for everyone, but again, this isn't Intrusion Detection, Shadow Style (which I took at SANS in 1999 with Northcutt), where we're reading through TCPDUMP traces line by line...

"There are companies that do without IDS, and they do just fine. I agree with Alan's assessment that IDS is like a Checkbox in most cases. Business can run without IDS just fine, why invest in such a technology?"

I am convinced that there are many companies that "do without IDS." By that token, there are many companies that can do without firewalls, IPS, UTM, AV, DLP, NAC, etc., until the day they realize they can't, or realize that to be even minimally compliant with any sort of regulation and/or "best practice" (a concept I don't personally care for), they cannot. I'm really, really interested in understanding how it is that these companies "...do just fine." Against what baseline and using what metrics does Ravi establish this claim? If they don't have IDS and don't detect potential "intrusions," then how exactly can it be determined that they are better off (or just as good) without IDS?

"Firewalls and other devices have built in features of IDS, so why invest in a separate product."

So I misunderstood the point here? It's not that IDS is useless, it's just that standalone IDS deployments from 2001 are useless, but if they're "good enough" functions bundled with firewalls or "other devices," they have some worth because they cost less? I can't figure out whether the chief complaint here is about efficacy or an ill-fated ROI exercise.

"IDS is like Vitamins, nice to have, not having won't kill you in most cases. Customers are willing to pay for Pain Killers because they have to address their pain right away. For Vitamins, they can wait. Stop and think for moment, without Anti-virus product, businesses can't run for few days. But, without IDS, most businesses can run just fine and I base it out of my own experience."

...and it's interesting to note the difference between chronic and acute pain. In many cases, customers don't notice chronic pain; it's always there, and they adjust their tolerance thresholds accordingly. It may not kill you suddenly, but over time the same might not be true. If you don't ingest vitamins in some form, you will die. When acute pain strikes, people obviously notice and seek immediate amelioration, but the painkiller option is really just a stop-gap: it doesn't "cure," it simply masks the pain. By this definition it's already too late for "prevention," because the illness has already set in and detection is self-evident: you're ill. Take your vitamins, however, and perhaps you won't get sick in the first place...

The investment strategy in IDS is different from that of AV. You're addressing different problems, so comparing them equilaterally is both unfair and confusing. In the long term, if you don't know what "normal" looks like (a metric or trend you can certainly gain from IDS/IPS/IDP systems), how will you determine what "abnormal" looks like? So, sure, just like the vitamin example, not having IDS may not "kill" you in the short term, but it can certainly grind you down over the long haul.

"Probably, I would have offended folks from the IDS camp. I have a good friend who is a founder of an IDS company, I am sure he will react differently if he reads my narratives about IDS. Once businesses start realizing that IDS is a Checkbox, they will scale down their investments in this area. In the current economic climate, financial institutions are not doing well. Financial institutions are big customers in terms of security products, with the current scenario of financial meltdown, they would scale down heavily on their spending on Vitamins."

Again, if you're suggesting that stand-alone IDS systems are not a smart investment and that the functionality will commoditize into larger suites over time, but the FUNCTION is useful and necessary, then we agree. If you're suggesting that IDS is simply not worthwhile, then we're in violent disagreement.

"Running IDS software on VMware sounds fancy. Technology does not matter unless you can address real world pain and prove the utilitarian value of such a technology. I am really surprised that IDS continues to exist. Proof of existence does not forebode great future. Running IDS on VMware does not make it any more utilitarian. I see a bleak future for IDS."

Running IDS in a virtual machine (whether on top of Xen, VMware or Hyper-V) isn't all that "fancy," but the need for visibility into virtual environments is great when virtual networking obfuscates what can be seen by traditional network and host-based IDS/IPS systems. You see a bleak future for IDS? I see just as many opportunities for the benefits it offers. It will just be packaged differently as it evolves, just as it has over the last 5+ years. /Hoff |
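Hoff's point that you can't spot "abnormal" without first knowing "normal" can be made concrete with a deliberately simple sketch: learn a baseline from historical per-interval event counts, then flag intervals that fall far outside it. The technique here is a plain mean-plus-or-minus-k-standard-deviations threshold, chosen as an assumption for illustration; it is not how any of the products named in the post work, and `flag_anomalies` and its threshold `k` are hypothetical.

```python
from statistics import mean, stdev
from typing import List, Sequence, Tuple


def flag_anomalies(
    baseline: Sequence[float], observed: Sequence[float], k: float = 3.0
) -> List[Tuple[int, float]]:
    """Return (index, count) pairs in `observed` that fall outside the band
    mean(baseline) +/- k * stdev(baseline).

    `baseline` holds historical per-interval event counts ("normal");
    `observed` holds new per-interval counts to check against it.
    """
    if len(baseline) < 2:
        raise ValueError("need at least two baseline samples")
    mu = mean(baseline)
    sigma = stdev(baseline)          # sample standard deviation
    lower, upper = mu - k * sigma, mu + k * sigma
    return [(i, c) for i, c in enumerate(observed) if c < lower or c > upper]
```

With eight baseline hours between 97 and 104 events, an hour with 250 events is flagged while ordinary hours pass. Picking `k`, and deciding what an interval is, is exactly the kind of tuning the post says the operator has to own.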

| Posted: 17 Oct 2008 07:26 AM CDT Anton is on a plane to California. Thanks to modern technology (scheduled posting), he just posted his take on how not to do application logging. If you are into software development, you might find his insights very useful. |

| Autumn 2008 Edition of 2600 on Newsstands [The IT Security Guy] Posted: 16 Oct 2008 09:34 PM CDT It seemed a bit early, but I happened to see the latest issue of 2600 on the newsstand this week and snapped it up, as I do every three months. As always, there's some good stuff in here. There are articles about Tor, cyberwar, Google Analytics, Blackhat SEO, pen testing and USB forensics. |

| Will You All Please Shut-Up About Securing THE Cloud...NO SUCH THING... [Rational Survivability] Posted: 16 Oct 2008 04:24 PM CDT

This love affair with abusing the amorphous thing called "THE Cloud" is rapidly approaching meteoric levels of asininity. In an absolute fit of angst I make the following comments:

If you thought virtualization and its attendant buzzwords, issues and spin were egregious, this billowy mass of marketing hysteria is enough to make me...blog ;) C'mon, people. Don't give in to the generalist hype. Cloud computing is real. "THE Cloud?" Not so much. /Hoff (I don't know what it was about this article that just set this little rant off, but well done, Mr. Moyle) |

| Insiders dodge security for productivity, RSA says [Telecom,Security & P2P] Posted: 16 Oct 2008 09:44 AM CDT A recent RSA survey found that insiders dodge security for the sake of productivity. I agree that it’s very common at companies for workers to share a computer or share some accounts. It might be an acceptable compromise in a non-critical, non-sensitive IT environment as a way to save costs. In most cases, though, it violates best practice and should be corrected. |

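| [Chinese] Clickjacking attacks [Telecom,Security & P2P] Posted: 16 Oct 2008 09:16 AM CDT SecurityFocus reported on a new kind of web-based attack: clickjacking. Put simply, it exploits browser flaws that let what a web page actually renders differ from what the user sees, in order to trick the user into clicking or entering whatever actions or content the attacker wants. Buttons, images, forms, links and the like can all be used to carry out the attack. With careful design, an attacker can even use clickjacking to take control of a victim's webcam and microphone. According to the report, today's mainstream browsers, such as IE, Chrome, Safari and Opera, are all affected, while NoScript, an add-on for Firefox 3.0, can help protect against the attack. Its discoverers, Hansen and Grossman, gave the attack the name Clickjacking, which is quite apt: a deceptive kind of hijacking. It is stealthier and more deceptive than simple domain-spoofing phishing. A Google search turned up a good write-up on the Duba (毒霸) blog. Here are some excerpts: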

|
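The post names NoScript as a client-side defense. On the server side, a site can ask browsers not to render its pages inside another site's frames, which removes the invisible-overlay trick clickjacking relies on. Below is a minimal Python WSGI middleware sketch of that idea; note that both headers it sets, `X-Frame-Options` and the Content-Security-Policy `frame-ancestors` directive, postdate this 2008 post, and `anti_framing` and `hello_app` are hypothetical names.

```python
def anti_framing(app):
    """WSGI middleware asking browsers not to display responses in frames."""
    def wrapped(environ, start_response):
        def start(status, headers, exc_info=None):
            # Drop any existing X-Frame-Options so there is no conflicting copy.
            headers = [(k, v) for k, v in headers
                       if k.lower() != "x-frame-options"]
            headers.append(("X-Frame-Options", "DENY"))
            # A separate CSP header is enforced alongside any existing policy.
            headers.append(("Content-Security-Policy", "frame-ancestors 'none'"))
            return start_response(status, headers, exc_info)
        return app(environ, start)
    return wrapped


def hello_app(environ, start_response):
    """Trivial example application."""
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"hello"]


app = anti_framing(hello_app)
```

The wrapped `app` can be served with any WSGI server, for example the standard library's `wsgiref.simple_server`. Pages that legitimately need to be framed by the same site could use `SAMEORIGIN` and `frame-ancestors 'self'` instead.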

| Job Opportunity of Server Architect [Telecom,Security & P2P] Posted: 16 Oct 2008 01:50 AM CDT There is a good job opportunity in our organization. If you are interested or have friends to recommend, please don’t hesitate to contact me by sending a CV/resume to my email address (richard.zhaol at gmail dot com). Job Description: This is a senior technical position in the Global Infrastructure Department, under the CIO organization. It is an individual-contributor role reporting directly to the Director of Architecture and Security Operations. 1. Lead the global roadmap and technology innovations related to servers, storage and virtualization. Requirements: 1. Bachelor's degree in Computer Science or Electrical Engineering with very good academic performance (Master's/PhD a plus) |

| Links for 2008-10-15 [del.icio.us] [HiR Information Report] Posted: 16 Oct 2008 12:00 AM CDT

|

| Links for 2008-10-15 [del.icio.us] [Sicurezza Informatica Made in Italy] Posted: 16 Oct 2008 12:00 AM CDT |

| ROSI: When It Pays to Invest in Information Security [Sicurezza Informatica Made in Italy] Posted: 15 Oct 2008 05:36 PM CDT After quite a long absence due to work and various projects, let's return to the subject of investment in information security. In the previous post I tried to give an idea of the steps to follow before drawing up an information security budget. An information security consultant can help define the objectives and priorities to pursue so that spending budgets, which are ever tighter these days, are not wasted. In this post I'd like to contribute to the discussion of ROI and ROSI in security and clear up many of the "misbeliefs" that unfortunately dominate the field. The first question to ask is: does it make sense to talk about ROI for security? If you want to be a pure economist, certainly not. ROI presupposes a Return, revenue from the investment, which clearly does not exist for spending on greater security and protection. To dispel any doubt: whereas ROI in general presupposes a gain in exchange for a cost, ROSI presupposes a saving in exchange for a cost. You make a security investment (a cost) to avoid the loss that would result from a successful attack on company assets. Cost avoidance. ROSI is positive when the cost is lower than the loss it avoids. This inversion of concepts is what has confused many people in the field and discouraged many managers anchored to the dogmas of pre-computer economics. So far it might seem to be just a matter of changing mindset, where, as in the evolution of species, those who can adapt to change survive and the others succumb. Unfortunately, it isn't that simple.
Before we can see why, we need the formulas involved in calculating ROSI in front of us. For a single risk:

SLE = AV * EF

SLE = Single Loss Expectancy (the loss from a single risk)
AV = Asset Value (the economic value of the asset to be protected)
EF = Exposure Factor (the probability that the risk materializes)

The investment is the cost that must be borne to reduce the EF associated with the risk under consideration by a certain percentage. This includes the assumption, correct in my opinion, that no security spending can truly reduce EF, that is, the probability that the attack succeeds, to 0. Security investment aims to reduce SLE as far as possible by reducing EF. A practical example clarifies the concept: an asset worth €1 million has a 10% probability (EF) of being compromised. As the formula above shows, the problems with calculating ROSI are many:

In the previous example, the SLE includes costs falling under the following headings:

In light of the observations above, does it still make sense to talk about ROSI? ROSI never appears in financial documents, but it can be useful for deciding whether a security investment is feasible and worthwhile, whatever the size of the organization. Who assigns the values in the SLE formula? The AV variable is usually valued by the executive level, which knows the economic value of each company asset better than anyone else. Properly managing the EF variable is the exclusive job of a security expert, who must know the state of security before the investment and be able to assess the impact of each threat. In upcoming posts I want to expand on the concept of ROI applied to information security. It is not true that you cannot earn anything from a security investment. Until next time! |
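The post's example stops at its inputs (an asset worth €1 million with a 10% EF), so here is a minimal Python sketch that carries the arithmetic forward. `single_loss_expectancy` follows the post's SLE = AV * EF, with EF treated as a fraction between 0 and 1 as the post defines it. The `rosi` ratio, (loss avoided - cost) / cost, is a commonly used formulation that is assumed here, not one the post gives; it is positive exactly when the cost is lower than the loss avoided, matching the post's rule. Both function names are hypothetical.

```python
def single_loss_expectancy(asset_value: float, exposure_factor: float) -> float:
    """SLE = AV * EF, with EF expressed as a fraction between 0 and 1."""
    if not 0.0 <= exposure_factor <= 1.0:
        raise ValueError("exposure_factor must be between 0 and 1")
    return asset_value * exposure_factor


def rosi(asset_value: float, ef_before: float, ef_after: float,
         investment: float) -> float:
    """Return on security investment as a ratio (assumed formula).

    loss avoided = SLE before the investment - SLE after it
    ROSI         = (loss avoided - investment) / investment
    """
    if investment <= 0:
        raise ValueError("investment must be positive")
    avoided = (single_loss_expectancy(asset_value, ef_before)
               - single_loss_expectancy(asset_value, ef_after))
    return (avoided - investment) / investment
```

With the post's figures, `single_loss_expectancy(1_000_000, 0.10)` gives €100,000. If a hypothetical €20,000 control cut EF from 10% to 4%, the avoided loss would be €60,000 and `rosi(1_000_000, 0.10, 0.04, 20_000)` returns 2.0; the after-investment EF and the cost are invented numbers, and estimating them credibly is exactly the difficulty the post describes.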

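| [Chinese] Russian researchers speed up WPA2 cracking 100-fold [Telecom,Security & P2P] Posted: 15 Oct 2008 09:24 AM CDT The Russian company ElcomSoft Co. Ltd. has successfully used the GPUs on nVidia graphics cards to crack WPA/WPA2 100 times faster. The report has attracted a lot of interest from security professionals. Previously, because of WEP's security problems, many companies and security standards, including PCI-DSS, upgraded their WiFi networks or recommended WPA/WPA2. Throwing more computing power and custom-optimized algorithms at encryption is not news in itself, but attacking WPA/WPA2 with relatively cheap graphics chips amounts to a dramatic drop in the cost of cracking. As these techniques develop, simply using WPA/WPA2 is no longer enough to be secure; depending on your security needs, you should use more complex passphrases or more advanced authentication methods. |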
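To see why GPUs matter here, it helps to know what a WPA/WPA2-PSK cracker computes for each guess. IEEE 802.11i derives the pairwise master key as PBKDF2-HMAC-SHA1 over the passphrase, salted with the SSID, for 4096 iterations and 32 bytes of output. The sketch below uses Python's standard `hashlib.pbkdf2_hmac` to do exactly that; `wpa2_pmk` is a hypothetical helper name, it says nothing about ElcomSoft's implementation, and a real attack would additionally need a captured four-way handshake to test each candidate key, which is omitted here.

```python
import hashlib


def wpa2_pmk(passphrase: str, ssid: str) -> bytes:
    """Derive the WPA/WPA2-PSK pairwise master key (IEEE 802.11i).

    PMK = PBKDF2-HMAC-SHA1(passphrase, salt=ssid, 4096 iterations, 32 bytes).
    A cracker must repeat this for every candidate passphrase, which is
    the work GPUs speed up.
    """
    if not 8 <= len(passphrase) <= 63:
        raise ValueError("WPA passphrases are 8 to 63 characters long")
    return hashlib.pbkdf2_hmac("sha1", passphrase.encode(), ssid.encode(),
                               4096, 32)
```

Because the per-guess cost is fixed by the standard, faster hardware only shrinks the time per guess; the defense the post recommends, a long and complex passphrase, works by making the number of guesses impractical.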

| You are subscribed to email updates from Security Bloggers Network To stop receiving these emails, you may unsubscribe now. | Email Delivery powered by FeedBurner |


| If you prefer to unsubscribe via postal mail, write to: Security Bloggers Network, c/o FeedBurner, 20 W Kinzie, 9th Floor, Chicago IL USA 60610 | |

