Spliced feed for Security Bloggers Network |

| Rothman does the Wave - my thoughts exactly! [StillSecure, After All These Years] Posted: 30 Sep 2008 07:30 AM CDT From Mike's Security Incite yesterday:

'nuff said. |

| Regulations, Risk and the Meltdown [Emergent Chaos] Posted: 30 Sep 2008 05:18 AM CDT There is obviously a large set of political questions around the 700+ billion dollars of distressed assets Uncle Sam plans to hold. If you care about the politics, you're already following in more detail than I'm going to bother providing. I do think that we need to act to stem the crisis, and that we should bang out the best deal we can before the rest of the banks in the US come falling like dominoes. As Bagehot said, no bank can withstand a crisis of confidence in its ability to settle. I say that knowing how distasteful and expensive it is, and with far better things to do with the $2,300 or so it will personally cost me as a taxpayer. (That $2,300 figure is per person.) I also say that knowing how poorly this administration has done in handling crises from 9/11 to Katrina, and how poorly it does when forced to act in a moment of crisis. (Sandy Levinson has some interesting comments at "A further Schmittian (and constitutional?) moment.") Finally, we are not bailing out the banks at the cost of a free market in banking. We gave up on a free market in banking in 1913 or so, after J.P. Morgan (not his eponymous bank) intervened to fix the crises of 1895 and 1907. What I did want to look at was the phrase "more regulation," and relate it a little to information security and risk management. US banks are already intensely regulated under an alphabet soup of laws like SOX, GLB, USA PATRIOT and BSA. They're subject to a slew of additional contractual obligations under things like PCI-DSS and Basel rules on capital. And that's leaving out the operational sand which goes by the name AML. In fact, the alphabet soup has gotten so thick that there's an acronym for the acronyms: GRC, or Governance, Risk and Compliance. Note that two of those three aren't about security at all: they're about process and laws. 
In the executive suite, it makes perfect sense to start security with those governance and compliance risks which put the firm or its leaders at risk. There's only so much budget for such things. After all, every dollar you spend on GRC and security is one that you don't return to your shareholders or take home as a bonus. And measuring the value of that spending is notoriously hard, because we don't share data about what happens. Just saying that measurement is hard is easy. It's a cop out. I have (macro-scale) evidence as to how well it all works:

There's obviously immediate staunching to be done, but as we come out of that phase and start thinking about what regulatory framework to build, we need to think about how to align the interests of bankers and society. If you'd like more on these aspects, I enjoyed Bob Blakley's "Wall Street's Governance and Risk Management Crisis" and Nick Leeson, "The Escape of the Bankrupt" (via Not Bad for a Cubicle. Thurston points out the irony of being lectured by Nick "Wanna buy Barings?" Leeson.) I'm not representing my co-author Andrew in any of this, but at least as I write this, his institution remains solvent. |

| (IN)SECURE Magazine Issue 18 [EnableSecurity] Posted: 29 Sep 2008 10:40 PM CDT

This issue also includes my column which talks about why the latest happenings in the security industry should shake us to our senses. The idea is that we need to realize that some of the Internet technologies that we rely on have fundamental flaws. Here’s a download link.  |

| Posted: 29 Sep 2008 02:08 PM CDT A couple of months ago we started sorting through all our work. In the process we realized that we had to find a new home for several of our projects. It was a tough decision because we had a lot of projects on our hands and there were even more pending to be completed in some fashion. Nevertheless, we decided to go with the plan. So, the idea of Secapps was born. So what is Secapps? Secapps is the new home of our GHDB tool. It will also be the new home for several other tools and perhaps a user-supported index of external tools, regardless of whether they are online or offline. Secapps can also be a free hosting environment for your application. Seriously, if you have an application that we like, we can host it there for you. It will be part of Secapps' application stack and as such sponsored by GNUCITIZEN. I can see a few positive ways Secapps can develop in the future. For now, it will simply host our online security tools. If you have any suggestions or recommendations, do not hesitate to contact us. |

| Economic crisis makes for strange bedfellows [StillSecure, After All These Years] Posted: 29 Sep 2008 01:53 PM CDT Last week, when McAfee bought Secure Computing, Pete Lindstrom did a nice job of detailing the comings and goings and incestuous relationships at play. With the announcement today of Citigroup buying up the banking assets of Wachovia at distressed prices, I reflected on some of the changes that have gone down recently. It makes the connections in the security deals pale by comparison. Citigroup, which in and of itself is the result of many mergers including Travelers Group and Smith Barney among others, is buying Wachovia, which took a piece of the Rock from Prudential and also A.G. Edwards and how many others. Last week the other bank we use, WaMu, was taken out by JPMorgan Chase. WaMu had taken over Providian, where we had some credit cards. I still remember when Chase swallowed Chemical Bank, which in turn had taken Manufacturers Hanover over. Then of course Chase also took out Bank One. Bank of America, which took over another bank I used to use, Fleet, now owns Merrill Lynch, which itself had bought so many other firms. Here we are left with, in essence, three banks in this country. If the bailout ever gets done, the US Government may own a significant chunk of them. Similar to the evolutionary theory on why dinosaurs got so big, they are filling the vacuum in the market and bulking up to survive in this new, changing finance world. My only comment is - you know what happened to the dinosaurs, right? Also, to all of these people spouting free markets and let the chips fall where they may, it seems like fiddling while Rome burns. The collapse of our financial system is going to be bad news for all of us!

|

| Podcast #58 - Bill Brenner, CSO Online [The Converging Network] Posted: 29 Sep 2008 12:44 PM CDT

Alan and I also talk about a host of items including the ever-evolving M&A activity in the security industry, Apple's wonderful blackbox "we know better" iPhone (which wiped out all of Alan's music during a recent upgrade), and "green IT" press releases by Mirage Networks and others. Enjoy the podcast. If you are interested in sponsoring the podcast, feel free to contact us. This posting includes an audio/video/photo media file: Download Now |

| SecuraBit Episode 11 [SecuraBit] Posted: 29 Sep 2008 10:37 AM CDT This week Anthony Gartner & Rob Fuller discuss the latest computer security news. Special guests are Vyrus and CP from the dc949.org group. Episode 11 discussions covered the following topics: Skynet, Advanced Dork, Google Site Indexer. These tools were worked on by CP and Vyrus and the dc949 group and are written as open source. Rob brought up a [...] This posting includes an audio/video/photo media file: Download Now |

| OWASP NYC AppSec Recap [The Security Shoggoth] Posted: 29 Sep 2008 10:01 AM CDT The OWASP NYC AppSec conference was this past week and I was lucky enough to be one of the speakers there. Overall, the conference was great and OWASP did a tremendous job doing everything they could to make the conference go as smoothly as possible. The organizers should be commended for the job they did. In the opening keynote, the organizers stated that this was the largest web app security conference in the world and I could see why. I believe there were over 800 people at the conference and every talk I went to was packed. While I went to many talks, there are a few that really stood out. They are: Malspam - Garth Bruen, knujon.com - Garth talked about what knujon has been able to accomplish over the last few months and it's been quite impressive. He has been gathering a lot of data on illicit networks and has found a clear link between porn, drugs and malware on the Internet. He gave one example where an illegal pharma site was shut down and two days later it was serving up porn and malware. Security Assessing Java RMI - Adam Boulton, Corsaire - This was an excellent talk on how to assess the Java Remote Method Invocation (RMI) APIs/tools/whatever from Sun. Basically, RMI is a distributed computing API for Java and has been part of the core JDK since 1.1 (java.rmi package). It's analogous to .NET, RPC or CORBA. Adam went over some methods for attacking RMI apps and previewed a tool of his named "RMI Spy" which (I believe) he'll be releasing. Flash Parameter Injection - Ayal Yogev & Adi Sharabani, IBM - This talk was about how to inject your own data into flash applications, the result being XSS, XSRF, or anything you can think of to attack the client. Basically, Flash applications have global variables which can be assigned as parameters when loading the flash movie in a web page. 
If the global variables are not initialized properly (and they usually aren't) then attackers can load their own flash apps and own the client. APPSEC Red/Tiger Team Projects, Chris Nickerson - The next talk was probably one of the best I attended at the conference. Chris Nickerson was one of the guys on the ill-fated Tiger Team show and is a really cool guy - I talked to him for some time at the OWASP party the night before. He stated in his talk that pen testing applications does not show how a "real world attack" would happen. By performing a red/tiger team approach to an application test, you are able to show the client how an attack would occur and how their app would be broken into. In other words, if someone wants the data in an app they're not just going to bang on it from the Internet - they're going to go to the client site and try to get information from there through various methods. Of course, those are brief descriptions of the talks. The conference will be releasing all talks on video so I recommend watching the videos - they will be worth it. |

| Patent Application for Aurora Vulnerability Fix [Digital Bond] Posted: 29 Sep 2008 10:00 AM CDT How would you feel if Core Security, KF, Eyal, Neutralbit … or Digital Bond … found a vulnerability in an important critical infrastructure component; created a sensational video demonstration of the impact / consequences that was picked up by CNN and the rest of the media; and then patented and licensed what we claimed to be THE solution to the vulnerability? A patent application for an Aurora vulnerability mitigation was published last week, originally filed on March 20, 2007. It was submitted by INL/Battelle Energy Alliance. It is reasonable to assume this was the technology licensed to Coopers and referenced in a few articles and significant scuttlebutt that claimed others were not adopting the ‘fix’. This is not meant as a slam of the patent holders. Rather it is hopefully a realpolitik wake up call to the community that everyone involved in the vulnerability disclosure issue: researchers, vendors, asset owners, universities, national labs, congress, executive branch agencies, magazines/media and yes, even consultants address vulnerability disclosure at least partially through self interest. No one is pure. Let’s wake up and realize that vulnerability disclosure is always going to be contentious and can’t be contained. Let’s place the emphasis on improving security engineering to reduce the number of vulns and the response to quickly and professionally address identified vulns. At least in this case a solution for the vuln, albeit hugely hyped and albeit for pay, was provided. |

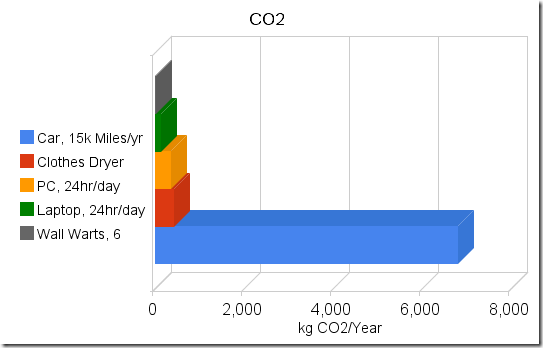

| Unplug Your Wall Warts and Save the Planet? [Last In - First Out] Posted: 29 Sep 2008 09:07 AM CDT Do wall warts matter? (09/29-2008 - Updated to correct minor grammatical errors.) Let's try something unique. I'll use actual data to see if we can save the planet by unplugging wall transformers. Step one – Measure wall wart power utilization. Remember that Volts x Amps = Watts, and Watts are what we care about. Your power company charges you for kilowatt-hours. (One thousand watts for one hour is a kWh.) Start with one clamp-on AC ammeter, one line splitter with a 10x loop (the meter measures 10x actual current) and one wall wart (a standard Nokia charger for an N800). And we have zero amps on the meter. OK - That meter is made for measuring big things, so maybe I need a different meter. Lesson one: Wall warts don't draw much current. They don't show up on the ammeter's scale even when amplified by a factor of 10. Try again - this time with an in-line multimeter with a 300mA range. Children - don't try this at home - unless you are holding on to your kid brother and he is properly grounded to a water pipe. (just kidding.....) That's better. It looks like we have a couple milliamps current draw. Try a few more. The ones I have (Motorola, Samsung, Nokia) are all pretty close to the same. Let's use a high estimate of 5mA @ 120v, or about a half of a watt. Similarly, checking various other parasitic transformers, like notebook computer power bricks, yields currents in the low milliamp ranges. When converted to watts, the power draw for each brick is somewhere between one-half and two watts. To make sure the numbers are rational and that I didn't make a major error somewhere, I did the simplest check of all. I placed my hand on the power bricks. 
When they are plugged into the wall and nothing is plugged into them, they are not warm. (Warm = watts). One more sanity check. Plugging three notebook power supplies and three phone power supplies into a power strip shows about 30mA @ 120v for all six bricks, which is under 4 watts, or less than a watt each. My measurements are rough (I don't have a proper milliamp meter), but for estimates for a blog that nobody actually reads, they should be close enough. So let's pretend that I want to save the planet, and that unplugging power bricks is the way I'm going to do it. I'll need to periodically plug them in to charge whatever they are supposed to charge. Let's assume they'll be plugged in for 4 hours per day and unplugged 20 hours per day. If I have a half dozen power bricks, I'll save around 5 watts x 20 hours = 100 watt-hours per day, or the amount of electricity that one bright light bulb uses in one hour. That would add up to 35kWh (Kilowatt-hours) per year. Not bad, right? Until you put it into perspective. Perspective: Let's take the other end of the home appliance spectrum. The clothes dryer (clothes tumbler to those on the damp side of the pond). That one is a bit harder to measure. The easiest way is to open up the circuit breaker box and locate the wires that go to the dryer. Hooking up to the fat red wire while the dryer is running shows a draw of about 24 amps @ 220 volts. I did a bit of poking around (Zzzztttt! Oucha!!.....Damn...!!!) and figured out that the dryer, when running on warm (versus hot or cold), uses about 20 amps for the heating element and about 4 amps for the motor. The motor runs continuously for about an hour per load. The heating element runs at about a 50% duty cycle for the hour that the dryer is running on medium heat. Assume that we dry a handful of loads per week and that one load takes one hour. 
If the motor runs 4 hours/week and the heating element runs half the time, or two hours per week, we'll use about a dozen kWh per week, or about 600 kWh per year. That's about the same as 100 wall warts. How about doing one less load of clothes in the dryer each week? You can still buy clothes lines at the hardware store - they are over in the corner by the rest of the End-of-Life merchandise, and each time that you don't use your clothes dryer, you'll save at least as much power as a wall wart will use for a whole year. Let's do another quick check. Let's say that I have a small computer that I leave running 24 hours per day. Mine (an old SunBlade 150 that I use as a chat and file server) uses about 60 watts when powered up but not doing anything. That's about 1.4 kWh per day or about 500kWh per year, roughly the same as my clothes dryer example and roughly the same as 100 wall warts. Anyone with a gaming computer is probably using twice as much power. So how about swapping it out for a lower powered home server? Notebooks, when idling with the screen off, seem to draw somewhere between 15 and 25 watts. (Or at least the three that I have here at home are in that range). That's about half of what a low end PC draws and about the same as 25 wall warts. So using a notebook as your home server will save you (and the planet) far more than a handful of wall warts. And better yet, the difference between a dimly lit notebook screen and a brightly lit one is about 5 watts. Yep - that's right, dimming your screen saves more energy than unplugging a wall wart. Make this easier! How about a quick and dirty way of figuring out what to turn off without spending a whole Sunday with ammeters and spreadsheets? It's not hard. If it is warm, it is using power. The warmer it is, the more power it is using. (Your laptop is warm when it is running and cold when it is shut off, right?). 
And if you can grab onto it without getting a hot hand, like you can do with a wall wart, (and like you can't do with an incandescent light bulb) it isn't using enough electricity to bother with. The CO2: So why do we care? Oh yeah - that global warming thing. Assuming that it's all about the CO2, we could throw a few more bits into the equation. Using the CO2 calculator at the National Energy Foundation in the UK and some random US Dept of Energy data, and converting wall warts to CO2 at a rate of 6kWh per wall wart per year and 1.5lbs of CO2 per kWh, it looks like you'll generate somewhere around 4kg of CO2 per year for each wall wart, +/- a kg or two, depending on how your electricity was generated. Compare that to something interesting, like driving your car. According to the above NEF calculator and other sources, you'll generate somewhere around a wall-wart-year's worth of CO2 every few miles of driving. (NEF and Sightline show roughly 1kg of CO2 every two miles of driving). So on my vacation this summer I drove 6000 miles and probably generated something like 3000kg of CO2. That's about 700 wall-wart-year equivalents (+/- a couple hundred wwy's). Take a look at a picture. (Or rather....take a look at a cheezy Google chart with the axis labels in the wrong order....) Can you see where the problem might be? (Hint - It's the long bright blue bar) Obviously my numbers are nothing more than rough estimates. But they should be adequate to demonstrate that if you care about energy or CO2, wall warts are not the problem and unplugging them is not the solution. Should you unplug your wall warts? You can do way better than that! Disclaimer: No wall warts were harmed in the making of this blog post. 
Total energy consumed during the making of the post: 5 - 23 watt CFL bulbs for 2 hours = 230 watt-hours; 5 - 25 watt incandescent bulbs for 1/2 hour = 62.5 watt-hours; one 18 watt notebook computer for 3 hours = 54 watt-hours; one 23 watt notebook for 3 hours = 69 watt-hours; Total of 415 watt-hours, or 28.8 wall-wart-days. Any relationship between numbers in this blog post and equivalent numbers in the real world is coincidental. See packaging for details. |
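
As a rough check on the arithmetic in the wall-wart post above, the sketch below recomputes its headline figures in Python from the numbers the post reports (5 mA at 120 V per wall wart, a 220 V dryer drawing 4 A for the motor and 20 A for the heater, a 60 W idle server). The function and variable names are illustrative assumptions, not anything from the original post, and volts x amps is treated as watts exactly as the post does, ignoring power factor.

```python
# Recompute the post's energy estimates from its own measurements.
# Names are hypothetical; only the input numbers come from the post.

HOURS_PER_YEAR = 24 * 365


def watts(volts, amps):
    """Volts x Amps = Watts, the simplification used in the post."""
    return volts * amps


def kwh(power_watts, hours):
    """Energy in kilowatt-hours for a load running a given number of hours."""
    return power_watts * hours / 1000.0


# One idle wall wart: high estimate of 5 mA at 120 V, plugged in all year.
wall_wart_w = watts(120, 0.005)
wall_wart_kwh_year = kwh(wall_wart_w, HOURS_PER_YEAR)

# Clothes dryer: motor runs 4 h/week, heater (50% duty cycle) 2 h/week.
dryer_kwh_week = kwh(watts(220, 4), 4) + kwh(watts(220, 20), 2)
dryer_kwh_year = dryer_kwh_week * 52

# Small server idling at 60 W around the clock.
server_kwh_year = kwh(60, HOURS_PER_YEAR)

if __name__ == "__main__":
    print(f"wall wart: {wall_wart_w:.2f} W, {wall_wart_kwh_year:.1f} kWh/year")
    print(f"dryer:     {dryer_kwh_week:.1f} kWh/week, {dryer_kwh_year:.0f} kWh/year")
    print(f"server:    {server_kwh_year:.0f} kWh/year")
```

Under these inputs a single wall wart comes out a little over 5 kWh per year, close to the 6 kWh figure the post uses for its CO2 estimate, while the dryer and the idle server each land in the hundreds of kWh per year, which is the post's point.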

| The Path of Least Resistance Isn't [Last In - First Out] Posted: 29 Sep 2008 09:07 AM CDT 09/29-2008 - Updated to correct minor grammatical errors. When taking a long term view of system management

As system managers, we are often faced with having to trade off short term tangible results against long term security, efficiency and stability. Unfortunately when we take the path of least resistance and minimize near term work effort, we often are left with systems that will require future work effort to avoid or recover from performance, security and stability problems. In general, when we short cut the short term, we are creating future work effort that ends up costing more time and money than we gained with the short term savings. Examples of this are:

In the world of car collectors, a similar concept is called Deferred Maintenance. Old cars cost money to maintain. Some owners keep up with the maintenance, making the commitments and spending the money necessary to keep the vehicles well maintained. They 'pay as they go'. Other owners perform minimal maintenance, only fixing what is obviously broken, leaving critical preventative or proactive tasks undone. So which old car would you want to buy? In the long run, the car owners who defer maintenance are not saving money, they are only deferring the expense of the maintenance until a future date. This may be significant, even to the point where the purchase price of the car is insignificant compared to the cost of bringing the maintenance up to date. And of course people who buy old collector cars know that the true cost of an old car is the cost of purchasing the car plus the cost of catching up on any deferred maintenance, so they discount the purchase price to compensate for the deferred maintenance. In system and network administration, deferred maintenance takes the form of unhardened, unpatched systems, non-standard application installations, ad hoc system management, missing or inaccurate documentation, etc. Those sorts of shortcuts save time in the short run, but end up costing time in the future. We often decide to short cut the short term work effort, and sometimes that's OK. But when we do, we need to make the decision with the understanding that whatever we saved today we will pay for in the future. Having had the unfortunate privilege of inheriting systems with person-years of deferred maintenance and the resulting stability and security issues, I can attest to the person-cost of doing it right the second time. |

| Privacy, Centralization and Security Cameras [Last In - First Out] Posted: 29 Sep 2008 09:07 AM CDT 09/29-2008 - Updated to correct minor grammatical errors. The hosting of the Republican National Convention here in St Paul has one interesting side effect. We finally have our various security and traffic cameras linked together: http://www.twincities.com/ci_10339532 "The screens will also show feeds from security cameras controlled by the State Patrol, Minnesota Department of Transportation, and St. Paul, Minneapolis and Metro Transit police departments." So now we have state and local traffic cameras, transit cameras and various police cameras all interconnected and viewable from a central place. This alone is inconsequential. When, however, a minor thing like this is repeated many times across a broad range of places and technologies and over a long period of time, the sum of the actions is significant. In this case, what's needed to turn this into something significant is a database to store the surveillance images and a way of connecting the If it did, J Edgar Hoover would be proud. The little bits and pieces that we are building to solve daily security and efficiency 'problems' are building the foundation of a system that will permit our government to efficiently track anyone, anywhere, anytime. Hoover tried, but his index card system wasn't quite up to the task. He didn't have Moore's Law on his side. As one of my colleagues indicates, hyper-efficient government is not necessarily a good thing. Institutional inefficiency has some positive properties. In the particular case of the USA there are many small overlapping and uncoordinated units of local, state and federal government and law enforcement. In many cases, these units don't cooperate with each other and don't even particularly like each other. There is an obvious inefficiency to this arrangement. But is that a bad thing? 
Do we really want our government and police to function as a coordinated, efficient, centralized organization? Or is governmental inefficiency essential to the maintenance of a free society? Would we rather have a society where the efficiency and intrusiveness of the government is such that it is not possible to freely associate or freely communicate with the subversive elements of society? A society where all movements of all people are tracked all the time? Is it possible to have an efficient, centralized government and still have adequate safeguards against the use of centralized information by future governments that are hostile to the citizens? As I wrote in Privacy, Centralization and Databases last April: What's even more chilling is that the use of organized, automated data indexing and storage for nefarious purposes has an extraordinary precedent. Edwin Black has concluded that the efficiency of Hollerith punch cards and tabulating machines made possible the extremely "...efficient asset confiscation, ghettoization, deportation, enslaved labor, and, ultimately, annihilation..." of a large group of people that a particular political party found to be undesirable. We are giving good guys full spectrum surveillance capability so that some time in the future when they decide to be bad guys, they'll be efficient bad guys. There have always been bad governments. There always will be bad governments. We just don't know when. |

| Scaling Online Learning - 14 Million Pages Per Day [Last In - First Out] Posted: 29 Sep 2008 09:06 AM CDT Some notes on scaling a large online learning application. 09/29-2008 - Updated to correct minor grammatical errors. Stats:

Design. Early design decisions by the vendor have been both blessings and curses. The application is not designed for horizontal scalability at the database tier. Many normal scaling options are therefore unavailable. Database scalability is currently limited to adding cores and memory to the server, and adding cores and memory doesn't scale real well. The user session state is stored in the database. The original version of the application made as many as ten database round trips per web page, shifting significant load back to the database. Later versions cached a significant fraction of the session state, reducing database load. The current version has stateless application servers that also cache session state, so database load is reduced by caching, but load balancing decisions can still be made without worrying about user session stickiness. (Best of both worlds. Very cool.) Load Curve. The load curve peaks early in the semester after long periods of low load between semesters. Semester start has a very steep ramp up, with first day load as much as 10 times the load the day before (See chart). This reduces opportunity for tuning under moderate load. The app must be tuned under low load. Assumptions and extrapolations are used to predict performance at semester startup. There is no margin for error. 
The app goes from idle to peak load in about 3 hours on the morning of the first day of classes. Growth tends to be 30-50% per semester, so peak load is roughly predicted at 30-50% above last semester peak. Early Problems: Unanticipated growth. We did not anticipate the number of courses offered by faculty the first semester. Hardware mitigated some of the problem. The database server grew from 4CPU/4GB RAM to 4CPU/24GB, then 8CPU/32GB in 7 weeks. App servers went from four to six to nine. Database fundamentals: I/O, Memory, and problems like 'don't let the database engine use so much memory that the OS gets swapped to disk' were not addressed early enough. Poor monitoring tools. If you can't see deep into the application, operating system and database, you can't solve problems. Poor management decisions. Among other things, the project was not allowed to draw on existing DBA resources, so non-DBA staff were forced onto a very steep database learning curve. Better take the book home tonight, 'cause tomorrow you're gonna be the DBA. Additionally, options for restricting growth by slowing the adoption rate of the new platform were declined, and critical hosting decisions were deferred or not made at all. Unrealistic Budgeting. The initial budget was also very constrained. The vendor said 'You can get the hardware for this project for N dollars'. Unfortunately N had one too few zeros on the end of it. Upper management compared the vendor's N with our estimate of N * 10. We ended up compromising at N * 3 dollars; that hardware lasted only a month, and within a year and a half we had spent N * 10 anyway. Application bugs. We didn't expect tempDB to grow to 5 times the size of the production database and we didn't expect tempDB to be busier than the production database. We know from experience that SQL Server 2000 can handle 200 database logins/logouts per second. But just because it can, doesn't mean it should. (The application broke its connection pooling.) 32 bits. 
We really were way beyond what could rationally be done with 32 bit operating systems and databases, but the application vendor would not support Query Tuning and uneven index/key distribution. We had parts of the database where the cardinality looked like a classic long tail problem, making query tuning and optimization difficult. We often had to make a choice of optimizing for one end of the key distribution or the other, with performance problems at whatever end we didn't optimize. Application Vendor Denial. It took a long time and lots of data to convince the app vendor that not all of the problems were the customer's. Lots of e-mail, sometimes rather rude, was exchanged. As time went on, they started to accept our analysis of problems, and as of today, are very good to work with. Redundancy. We saved money by not making the original file and database server clustered. That cost us in availability. Later Problems: Moore's Law. Our requirements have tended to be ahead of where the hardware vendors were with easily implementable x86 servers. Moore's Law couldn't quite keep up with our growth rate. Scaling x86 SQL server past 8 CPUs in 2004 was hard. In 2005 there were not very many options for 16 processor x86 servers. Scaling to 32 cores in 2006 was not any easier. Scaling to 32 cores on a 32 bit x86 operating system was beyond painful. IBM's x460 (x3950) was one of the few choices available, and it was a painfully immature hardware platform at the time that we bought it. The "It Works" Effect. User load tended to ramp up quickly after a smooth, trouble free semester. The semester after a smooth semester tended to expose new problems as load increased. Faculty apparently wanted to use the application but were held back by real or perceived performance problems. When the problems went away for a while they jumped on board, and the next semester hit new scalability limits. Poor App Design. 
We had a significant number of high volume queries that required re-parsing and re-optimization on each invocation. Several of the most frequently called queries were not parameterizable and hence had to be parsed each time they were called. At times we were parsing hundreds of new queries per second, using valuable CPU resources on parsing and optimizing queries that would likely never get called again. We spent person-months digging into query optimization and building a toolkit to help dissect the problem. Database bugs. Page latches killed us. Tier 3 database vendor support, complete with a 13 hour phone call and gigs of data, finally resolved a years-old (but rarely occurring) latch wait state problem, and also uncovered a database engine bug that only showed up under a particularly odd set of circumstances (ours, of course). And did you know that when 32-bit SQL Server sees more than 64GB of RAM it rolls over and dies? We didn't. Neither did Microsoft. We eventually figured it out after about 6 hours on the phone with IBM Advanced Support, MS operating system tier 3 and MSSQL database tier 3 all scratching their heads. /BURNMEM to the rescue. High End Hardware Headaches. We ended up moving from a 4-way HP DL580 to an 8-way HP DL740 to 16-way IBM x460s (and then to 32 core x3950s). The x460s and x3950s ended up being a maintenance headache beyond anything that I could have imagined. We hit motherboard firmware bugs, disk controller bugs, had bogus CPU overtemp alarms, hardware problems (bad voltage regulators on chassis interface boards), and even ended up with an IBM 'Top Gun' on site. (That's her title. And no, there is no contact info on her business card. Just 'Top Gun'.) File system management. Maintaining file systems with tens of millions of files is a pain, no matter how you slice it. Things that went right: We bought good load balancers right away. 
The Netscalers have performed nearly flawlessly for 4 years, dishing out a thousand pages per second of proxied, content switched, SSL'd and Gzip'd content. The application server layer scales out horizontally quite easily. The combination of proxied load balancing, content switching and stateless application servers allows tremendous flexibility at the app server layer. We eventually built a very detailed database statistics and reporting engine, similar to Oracle AWR reports. We know, for example, what the top N queries are for CPU, logical I/O, physical I/O, etc., at ten minute intervals any time during the last 90 days. HP Openview Storage Mirroring (Doubletake) works pretty well. It's keeping 20 million files in sync across a WAN with not too many headaches. We had a few people who dedicated person-years of their lives to the project, literally sleeping next to their laptops, going for years without ever being more than arm's reach from the project. And they don't get stock options. I ended up with a couple of quotable phrases to my credit. On Windows 2003 and SQL Server: "It doesn't suck as bad as I thought it would" and "It's displayed an unexpected level of robustness". Lessons: Details matter. Enough said. Horizontal beats vertical. We know that. So does everyone else in the world. Except perhaps our application vendor. The application is still not horizontally scalable at the database tier and the database vendor still doesn't provide a RAC-like horizontally scalable option. Shards are not an option. That will limit future scalability. Monitoring matters. Knowing what to monitor and when to monitor it is essential to both proactive and reactive application and database support. AWR-like reports matter. We have consistently decreased the per-unit load on the back end database by continuously pounding down the top 'N' queries and stored procedures. The application vendor gets a steady supply of data from us. 
They roll tweaks and fixes from their customers into their normal maintenance release cycle. It took a few years, but they really do look at their customers' performance data and develop fixes. We fed the vendor data all summer. They responded with maintenance releases, hot fixes and patches that reduced database load by at least 30%. Other vendors take note. Please. Vendor support matters. We had an application that had 100,000 users, and we were using per-incident support for the database and operating system rather than premier support. That didn't work. But it did let us make a somewhat amusing joke at the expense of some poor first tier help desk person. Don't be the largest installation. You'll be the load test site. Related Posts: The Quarter Million Dollar Query; Unlimited Resources; Naked Without Strip Charts. [1] For our purposes, a page is a URL with active content that connects to the database and has at least some business logic. |
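The "top N queries at ten minute intervals over the last 90 days" report mentioned in the post above could be sketched roughly as follows. This is only an illustration, not the author's actual reporting engine: the sample record layout (`ts`, `query_id`, and a metric such as `cpu_ms`) and the function names are assumptions made up for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta

BUCKET_MINUTES = 10               # reporting interval mentioned in the post
RETENTION = timedelta(days=90)    # history window mentioned in the post


def bucket_start(ts: datetime) -> datetime:
    """Round a timestamp down to the start of its ten-minute bucket."""
    return ts - timedelta(minutes=ts.minute % BUCKET_MINUTES,
                          seconds=ts.second,
                          microseconds=ts.microsecond)


def top_queries(samples, metric, n, now):
    """Return {bucket_start: [(query_id, total), ...]}, top n per bucket.

    samples is an iterable of dicts with the (hypothetical) keys "ts",
    "query_id" and the chosen metric name. Samples older than RETENTION,
    measured from now, are ignored.
    """
    totals = defaultdict(lambda: defaultdict(float))
    for sample in samples:
        if now - sample["ts"] > RETENTION:
            continue
        totals[bucket_start(sample["ts"])][sample["query_id"]] += sample[metric]

    report = {}
    for bucket, per_query in totals.items():
        # Rank queries in this bucket by their summed metric, largest first.
        ranked = sorted(per_query.items(), key=lambda kv: kv[1], reverse=True)
        report[bucket] = ranked[:n]
    return report
```

Calling `top_queries(samples, "cpu_ms", 5, datetime.now())` would give the five most CPU-expensive queries for each ten-minute bucket; the same function works for logical or physical I/O if the samples carry those metrics under other key names.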

| ISS 2 years after [StillSecure, After All These Years] Posted: 29 Sep 2008 08:27 AM CDT Neil Roiter over at Techtarget has a good article up on what has become of ISS as it approaches 2 years under the rule of Big Blue. Of course Mitchell and I had Tom Noonan on just a few weeks ago and, as we discussed then, Tom is no longer at IBM/ISS. At the time of the ISS acquisition, speculation was rampant over whether IBM would continue the ISS product line or instead concentrate on the services side of the ISS business, which actually represented the majority of the revenue. Coinciding with the 2 year anniversary, IBM/ISS actually released a slew of new/updated products:

So at first blush it seems that ISS/IBM is still very much concentrated on products. It took them 2 years to find their way within the IBM universe, but they are getting back to business. But as Neil points out, a closer look at the new releases shows two trends: 1. IBM/ISS is moving down to the SMB/SME market. Clearly making products easier and better suited to a smaller customer was a driving force here. 2. MSSP or SaaS is the holy grail for them. All of these products are being made to work together and be managed by a central outsourced MSSP. IBM, like many others, sees the security market for the mid-market moving to a managed model. IBM wants to move downstream from managing not only the largest networks in the world, but managing every network in the world. Network management is more than just security, but security will play an important role in it. We are going to see IBM, HP, Verizon, etc. increasingly coming down into the SMB/SME market to offer to manage IT environments for customers. Historically this has always been like herding cats. The question is, what will make it different this time?

|

| Credit Limits for Security [Phillip Hallam-Baker's Web Security Blog] Posted: 29 Sep 2008 08:05 AM CDT The Consumerist has become part of my daily security reading. Frauds are often apparent to the consumer before they are apparent to the business that was compromised.

|

| Adam on CS TechCast [Emergent Chaos] Posted: 29 Sep 2008 03:36 AM CDT I did a podcast with Eric and Josh at CS Techcast. It was lots of fun, and is available now: link to the show Welcome to another CSTechcast.com podcast for IT professionals. This week we interview Adam Shostack, author of The New School of Information Security about the essentials IT organizations need to establish to really do security right. |

| Misc notes on IDS/IPS [Musings on Information Security] Posted: 28 Sep 2008 10:11 PM CDT Chris Hoff's response on his blog Rational Survivability makes me happy on two fronts. The primary reason I started this blog was to use this medium as an outlet for my ungrounded ego. The other was to participate in the Security Blogging community, which was just catching on when I started this blog 2 years ago. Getting a response to my musings from brilliant minds such as Mike Rothman, Alan Shimel, Chris Hoff and others gives me immense joy. Maybe this is good therapy for my undiagnosed attention deficit. It does not matter if Chris is right or I am right. The outcome of IDS/IPS is all determined by the random drift of market forces. There is no conspiracy to make IDS/IPS this way or that way. I would like to wrap up with a quote from Arthur Chandler: "We can tell when a technology has truly arrived when the new problems it gives rise to approach in magnitude the problem it was designed to solve". |

| Bypassing the Great Firewall of China - iaminchina.com [The Dark Visitor] Posted: 28 Sep 2008 09:37 PM CDT The iaminchina.com site has an informative article on bypassing the Great Firewall/Golden Shield. It is a how-to on using Firefox with torbutton. There are many ways to get around the GFW. Web-CGI/PHP based proxies all seem to work reasonably well (though frequently slow) and there are other anonymizer services out there such as Anonymouse and Ultrasurf. James Fallows from the Atlantic has blogged about using commercial anonymizing VPN services. |

| Essential Complexity versus Accidental Complexity [Last In - First Out] Posted: 28 Sep 2008 07:58 PM CDT This axiom by Neal Ford[1] on the 97 Things wiki:

should be etched on to the monitor of every designer/architect. The reference is to software architecture, but the axiom really applies to designing and building systems in general. The concept expressed in the axiom is really a special case of 'build the simplest system that solves the problem', and is related to the hypothesis I proposed in Availability, Complexity and the Person Factor[2]:

Over the years I've seen systems that badly violate the essential complexity rule. They've tended to be systems that were evolved over time without ever really being designed, or systems where non-technical business units hired consultants, contractors or vendors to deliver 'solutions' to their problems in an unplanned, ad-hoc manner. Other systems are ones that I built (or evolved) over time, with only a vague thought as to what I was building. The worst example that I've seen is a fairly simple application that essentially manages lists of objects and equivalencies (i.e., object 'A' is equivalent to object 'B') and allows various business units to set and modify the equivalencies. The application builds the lists of equivalencies, allows business units to update them and pushes them out to a web app. Because of the way that the 'solutions' were evolved over the years by the random vendors and consultants, just running the application and data integration requires COBOL/RDB on VMS; COBOL/Oracle/Java on Unix; SQL Server/ASP/.NET on Windows; Access and Java on Windows; DCL scripts, shell scripts and DOS batch files. It's a good week when that process works. Other notable quotes from the axiom:

And

The first quote relates directly to a proposal that we have in front of us now, for a product that will cost a couple hundred grand to purchase and implement, and likely will solve a problem that we need solved. The question on the table though, is 'can the problem be solved without introducing a new multi-platform product, dedicated servers to run the product, and the associated person-effort to install, configure and manage the product?' The second quote also applies to people who like deploying shiny new technology without a business driver or long term plan. One example I'm familiar with is a virtualization project that ended up violating essential complexity. I know of an organization that deployed a 20 VM, five node VMware ESX cluster complete with every VMware option and tool, including VMotion. The new (complex) environment replaced a small number of simple, non-redundant servers. The new system was introduced without an overall design, without an availability requirement and without analysis of security, cost, complexity or maintainability. Availability decreased significantly, cost increased dramatically. The moth met the flame. Perhaps we can generalize the statement:

A more detailed look at essential complexity versus accidental complexity in the context of software development appears in The Art of Unix Programming[3].
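To make the point about the equivalency application concrete, here is a minimal sketch of how small the essential problem (tracking which objects are equivalent to which) can be. It is not a description of the real system: the class and method names are invented for illustration, it uses a standard union-find structure, and it only covers setting and querying equivalencies, not removing one or pushing lists to a web app.

```python
class Equivalences:
    """Tracks equivalence groups of hashable object names (union-find)."""

    def __init__(self):
        self._parent = {}

    def _find(self, item):
        """Return the representative of item's group, registering new items."""
        self._parent.setdefault(item, item)
        while self._parent[item] != item:
            # Path halving: point item at its grandparent as we walk up.
            self._parent[item] = self._parent[self._parent[item]]
            item = self._parent[item]
        return item

    def set_equivalent(self, a, b):
        """Record that a and b are equivalent, merging their groups."""
        root_a, root_b = self._find(a), self._find(b)
        if root_a != root_b:
            self._parent[root_b] = root_a

    def are_equivalent(self, a, b):
        """True if a and b have been linked directly or transitively."""
        return self._find(a) == self._find(b)

    def groups(self):
        """Return the current equivalence groups as a list of sets."""
        by_root = {}
        for item in list(self._parent):
            by_root.setdefault(self._find(item), set()).add(item)
        return list(by_root.values())
```

After `eq.set_equivalent("A", "B")` and `eq.set_equivalent("B", "C")`, `eq.are_equivalent("A", "C")` is true. The real application surely has requirements this sketch ignores, but the contrast with five platforms and three scripting languages is the accidental complexity the post describes.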

|

| Book Review: Build Your Own Security Lab [Nicholson Security] Posted: 28 Sep 2008 07:48 PM CDT The Good I have had this book on my bookshelf for a few months and recently, due to some textbook changes in my Windows Security class, I decided to read it. The book covers the usual ground you would expect, network hardware, virtual machines and various OS and network software. The first chapter talks about getting used [...] |

| Security News Links [Nicholson Security] Posted: 28 Sep 2008 01:55 PM CDT NYC AppSec Infosec Conference Event - Summary Myths, Misconceptions, Half-Truths and Lies about Virtualization Malware Challenge begins October 1st! MindshaRE: WinDbg Introduction World’s electrical grids open to attack BSQL Hacker - Automated SQL Injection Framework After Fake Blogs Come The Fake Forums (IN)SECURE Magazine Issue 18 Fun with WiFu and Bluesniffing Maltego 2 and beyond - Part 2 Modern Exploits - Do You Still Need [...] |

| And I thought I didn't like Streisand [Emergent Chaos] Posted: 28 Sep 2008 01:19 PM CDT While Babs' vocal stylings may be an "acquired taste", today I have a new appreciation for the Streisand Effect. Thanks to Slashdot, I learned that Thomson Reuters is suing the Commonwealth of Virginia alleging that Zotero, an open-source reference-management add-on for Firefox, contains features resulting from the reverse-engineering of Endnote, a competing commercial reference management product. Turns out that while I am pretty happy with Bibdesk, it's not the perfect solution for me. I had never heard of Zotero, so I downloaded it and played around. Color me impressed. If you are looking for a browser-integrated citation and reference management tool, I'd give Zotero a look. |

| Digital Bond Turns Ten [Digital Bond] Posted: 28 Sep 2008 07:51 AM CDT Digital Bond opened our doors ten years ago today on Sept 28, 1998. Like most businesses, Digital Bond morphed over time. Gen 1 was a company designing a smart card solution to secure Internet brokerage transactions. We actually did pharming demonstrations with brokerage sites back in 1999, but we were never able to get the large brokerage beta client to get this product to take off, and of course a few bubble bursts didn’t help. We started doing security consulting to pay the bills rather than go for another round of angel/venture funding. Some of the team found they actually liked consulting more than developing products. Gen 2 was a combination security consulting / value added reseller focused on the Florida market. We did assessment, architecture and policy engagements for a lot of banks and ecommerce companies, and we also sold, installed and supported products from Checkpoint, Cisco, Network Associates, Websense, … We found the resale/install to be more trouble than it was worth and quickly moved to pure consulting. Gen 3 is where we are today, a control system security consulting and research practice. And we stumbled into that when a very large water system asset owner asked us to perform a security assessment on their SCADA system back in 2000. It is a bit scary looking back at that now, knowing what we have learned over the past eight years. Control systems security engagements became a growing part of our business, slowly at first but then easily. Sometime in 2004 we decided to focus on control system security and since then it has been our entire business except for longtime customers in banking we still support. A few key dates in our control system security history: October 2003 - we started the SCADA Security Blog. There are now over 850 blog entries. 
It is funny that the second entry discusses the Modbus Hack Demo that was making the rounds in the control system events and now five years later is being shown at events like Defcon/Black Hat. March 2004 - Digital Bond received our first research contract from DHS to create IDS signatures for control system protocols. Given these signatures are in almost every commercial IDS I think DHS got their $100K’s worth. December 2006 - The SCADApedia started because we got tired of good, factual information getting aged off and buried in the blog. I know the SCADApedia has not gotten a lot of traction yet, but it is something that needs to be a certain size before the value is clear. Now with over 100 entries of increasing detail more people are using it. January 2007 - Digital Bond’s first annual SCADA Security Scientific Symposium [S4] takes place in Miami Beach with about 35 physical attendees and about 20 virtual attendees. Attendance grew by 50% in 2008, and we anticipate a sellout in 2009. S4 was created out of frustration that there was nowhere to present technical research to a technical audience. October 2007 - Digital Bond is awarded a Dept. of Energy research contract that is leading to the Bandolier and Portaledge projects. May 2008 - Digital Bond is awarded a DHS research contract that is leading to Quickdraw. What is missing from this highlight timeline is our consulting clients, many fantastic asset owners who always desire to avoid security publicity. I frequently say that we are blessed in that we work with people who care about control system security; otherwise they wouldn’t pay to hire us. They are the top 10%, the early adopters. Many of the clients have been working with us for 3, 5, and even 8 years. The progress they make from a system with many security problems to a set of effective technical and administrative controls is impressive and a credit to them. 
We even have some long time clients who ask us what else they should be doing, and there is nothing on the list of more value than more rigor in ensuring they are effectively implementing what they have in place. Finally I would be remiss if I didn’t mention that Digital Bond has had a number of talented security professionals who are now Digital Bond alumni, and I want to publicly thank them for their hard and often brilliant work while they were with the company. The fact that they all remain close and willing to help Digital Bond, and vice versa, is a source of pride. After ten years I hope we learned something about creating and running a business. My unsolicited advice to anyone thinking about starting a company is that you will have higher highs and lower lows as an entrepreneur than you can ever prepare for. Make sure you enjoy the highs and power through those lows. |

| If you build it, will they come? [Sunnet Beskerming Security Advisories] Posted: 28 Sep 2008 02:28 AM CDT Despite many people insisting that all it takes to get online traffic is to build it and people will come, sometimes it doesn't turn out that way, as the University of Illinois is currently finding out. Earlier this year, the University of Illinois set out to establish an online campus that would allow students to obtain degrees online; however, the response has been underwhelming, to say the least. Online degree programs have always been regarded with suspicion, but the idea of delivering degrees online is only one step removed from the degrees by correspondence that many universities offer for students who work or otherwise can't attend classes full-time. Having ready access to a network connection means that coursework can include media and improved learning aids that cannot really be delivered through the mail. The University expected 5,000 students by the five year mark, but fewer than 150 students have taken up the opportunity since the system went online. One of the biggest problems with the University of Illinois' online campus seems to be that the whole concept relied upon University departments creating new coursework and material in order to create online degree programs. It has rapidly become apparent that there aren't too many departments with the time or interest to create new coursework for the system. This is an excellent demonstration of what can happen when you don't adequately plan for how a concept is to be implemented before actually trying to implement it. Social networks rely upon their users for most of their content and relevance, but it seems that online degree programs (at least the legitimate ones) aren't as simple to establish. Perhaps a better approach would have been to arrange for the various high demand courses to be created ahead of time and then placed online. 
Achieving accreditation, as suggested in the article, could help somewhat. |

| Posted: 27 Sep 2008 07:33 AM CDT A couple of months back GNUCITIZEN started House of Hackers, a social network for hackers and other like-minded people. Keep in mind that we use the word hacker in a much broader context, i.e. someone who is intellectually challenged by the limitations of a system. Certainly, we do not promote criminal activities. Today the network has expanded to 5500 members. I believe that it will reach 6000 members by the end of the year. It has been a huge success so far. HoH has groups which cover almost every area of the information security field and also some unique ones such as Stock Market Hackers, Urban Explorers, Ronin, Female Hackers, Black PR, etc. So, let’s get to the point. Would you be interested in sponsoring HoH in return for placing your brand on our sponsors page, which is also displayed on the front page and in the sidebar of every other page? We are open to any other suggestions you may have. If you are interested, get in touch with us from our contact page. |
